LiDAR is a core sensor in autonomous systems, helping machines understand their surroundings in three dimensions. It plays a central role in perception tasks such as object detection and localization. However, as these systems move into dense urban environments, a range of LiDAR perception challenges begins to limit how reliably models can detect and interpret what is happening in the scene.

City scenes introduce frequent occlusions, high traffic density, reflective infrastructure, and constantly changing road activity. These factors make it more difficult to maintain perception performance at scale.

Part of this performance gap also comes from how perception systems are trained and evaluated. Early benchmarks such as the KITTI dataset helped accelerate progress in LiDAR-based detection, but they do not fully capture the complexity of modern urban environments.

This article explains why LiDAR perception models often plateau in urban settings and how perception teams can improve model performance through better scenario coverage and dataset quality.

Why City Environments Are Hard for LiDAR Perception Models

LiDAR perception systems perform well in structured environments, such as highways, and on benchmark datasets. Dense urban environments are harder: scenes are dynamic, crowded, and often only partially visible.

Some of these challenges include:

- Occlusions Reduce Object Visibility: Urban traffic creates frequent partial visibility conditions. Vehicles stop close to one another at intersections, and large vehicles often block smaller road users, such as pedestrians, from view. When only a small portion of an object appears in the point cloud, detection models receive incomplete shape information. This makes classification less stable and increases the chance of missed detections.

- Distant and Small Objects are Harder to Detect: LiDAR sensors sample the scene at fixed angular intervals, so the gap between neighboring beams widens with distance and fewer points land on objects that are far away. Road users like cyclists and scooter riders already occupy limited space in the scene. When they appear at longer ranges, their representation becomes even sparser. Models may detect them later than expected or confuse them with background structures.

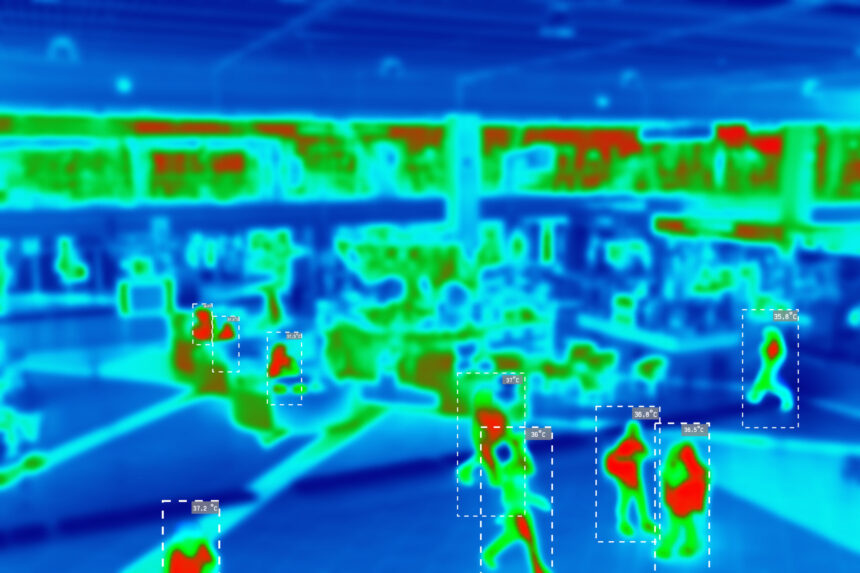

- Reflective Surfaces and Adverse Weather Introduce Signal Noise: Glass buildings, metal barriers, and polished vehicle surfaces can deflect LiDAR pulses away from the sensor or bounce them off other surfaces before they return, producing missing points or spurious ghost returns that subtly change the structure of the point cloud. Weather adds another layer of difficulty. Rain and fog scatter laser pulses before they return to the sensor, which reduces signal clarity and makes object boundaries harder to define. For example, in thick fog with visibility of 50 m or less, the number of returned LiDAR points (NPC) can drop by up to 59%. This loss of point density reduces detection consistency and makes perception less reliable.

- Combining LiDAR with Cameras and Radar Increases System Complexity: Most production perception systems rely on multiple sensors rather than LiDAR alone. Cameras capture texture and color. Radar provides strong distance estimates in difficult visibility conditions. These sensors improve overall perception performance, but they must remain aligned in time and space. Even small calibration differences can reduce the quality of fused perception outputs. Tools like iMerit’s 3D Multi-Sensor Data Labeling Tool help align LiDAR, camera, and radar data more accurately and make labeling more consistent.
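The range and fog effects described above can be made concrete with a back-of-the-envelope estimate. The sketch below assumes a LiDAR with uniform horizontal and vertical angular spacing and a flat object facing the sensor; the resolution defaults and the `expected_points` helper are hypothetical, not the specification of any real sensor. The `return_fraction` parameter applies the fog figure quoted above (a 59% loss, so 0.41 of points remain) as a flat multiplier, which is a simplification.

```python
import math


def expected_points(width_m: float, height_m: float, range_m: float,
                    h_res_deg: float = 0.2, v_res_deg: float = 0.4,
                    return_fraction: float = 1.0) -> float:
    """Rough number of LiDAR returns on a flat object facing the sensor.

    Assumes uniform angular spacing (the defaults are hypothetical, not a
    real sensor's specification) and a uniform fraction of pulses that come
    back (1.0 = clear air, lower values mimic fog or rain losses).
    """
    # The gap between neighboring beams grows linearly with range.
    h_gap_m = range_m * math.radians(h_res_deg)
    v_gap_m = range_m * math.radians(v_res_deg)
    # Columns times rows of beams that hit the object, scaled by returns.
    return (width_m / h_gap_m) * (height_m / v_gap_m) * return_fraction


# A pedestrian-sized target (0.5 m wide, 1.7 m tall) at several ranges.
for r in (10, 20, 40, 80):
    clear = expected_points(0.5, 1.7, r)
    foggy = expected_points(0.5, 1.7, r, return_fraction=0.41)
    print(f"{r:>3} m: ~{clear:.0f} points clear, ~{foggy:.0f} in heavy fog")
```

Because both beam gaps grow with range, the estimate falls with the square of distance: doubling the range leaves roughly a quarter of the points, before any weather losses are applied.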

These challenges are not only caused by the driving environment itself. They are also connected to how LiDAR perception models are trained. When complex urban situations are missing or unevenly represented in training datasets, models get fewer chances to learn how to handle them.

Training Data Gaps that Slow Progress

Many LiDAR perception challenges become harder to address when datasets do not fully capture the complexity of real-world city environments.

Three types of training data gaps often limit progress.

1. Rare Urban Scenarios

Perception models improve through repeated exposure to real driving situations. However, many safety-critical events occur less frequently than standard traffic scenes. As a result, they appear less often in training datasets.

Examples include:

- Temporary road layouts near construction zones.

- Pedestrians moving unpredictably between vehicles.

- Emergency vehicles approaching from outside the main traffic flow.

- Micro-mobility vehicles moving through narrow gaps in traffic.

These situations introduce uncertainty into the scene. If they are underrepresented in training data, detection performance can become less reliable when they occur in production environments.

2. Dataset Coverage Gaps

Dataset scale alone does not guarantee perception improvement. Scene diversity plays a more important role in urban environments.

Many perception datasets contain large numbers of highway sequences or structured driving conditions. Dense intersections, roadside activity zones, and mixed-traffic environments are often less represented by comparison. This imbalance makes it harder for models to generalize across complex city scenes.

Improving dataset coverage across different urban layouts helps perception systems handle variation more consistently.
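A simple way to make coverage gaps visible is to measure how often each scene type appears and flag the ones that fall below a chosen share. The sketch below assumes each sequence carries a list of scene tags in its metadata; the tag names, the `coverage_report` helper, and the 5% threshold are hypothetical choices for illustration. Passing `expected_tags` lets the audit flag scene types that are missing entirely, which a plain frequency count would never show.

```python
from collections import Counter
from collections.abc import Iterable


def coverage_report(sequence_tags: list[list[str]],
                    expected_tags: Iterable[str] = (),
                    min_share: float = 0.05):
    """Share of sequences containing each scene tag, plus under-covered tags.

    expected_tags lists scene types that should exist even if they never
    appear yet, so a complete absence is flagged too.
    """
    if not sequence_tags:
        raise ValueError("no sequences to audit")
    # Count each tag at most once per sequence.
    counts = Counter(tag for tags in sequence_tags for tag in set(tags))
    total = len(sequence_tags)
    all_tags = set(counts) | set(expected_tags)
    shares = {tag: counts[tag] / total for tag in all_tags}
    under = sorted(tag for tag, share in shares.items() if share < min_share)
    return shares, under


sequences = (
    [["highway"]] * 70
    + [["urban", "intersection"]] * 25
    + [["urban", "construction_zone"]] * 3
    + [["urban", "intersection", "emergency_vehicle"]] * 2
)
shares, under = coverage_report(sequences, expected_tags=["night"])
print(under)  # ['construction_zone', 'emergency_vehicle', 'night']
```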

3. Annotation Quality

High-quality annotation is essential for reliable LiDAR perception training. Even small inconsistencies in labeling can affect how models interpret objects and motion across frames.

Common dataset issues include:

- Inconsistent labeling across similar object categories.

- Limited taxonomy detail for complex urban settings.

- Alignment gaps between LiDAR and camera data.

- Missing tracking continuity across frame sequences.

These problems reduce the quality of training signals available to perception models. Over time, they can slow performance improvements even when the dataset size increases.
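Missing tracking continuity, the last issue in the list, is one of the easier problems to audit automatically. A minimal sketch, assuming annotations can be exported as `(frame_index, track_id)` pairs (a hypothetical schema), reports the frames inside each track's lifespan where that track has no label. Some gaps are legitimate, such as full occlusion, so the output is best treated as a review queue rather than a list of errors.

```python
from collections import defaultdict


def find_track_gaps(labels):
    """Return {track_id: [missing frames]} for gaps inside each track's lifespan.

    labels: iterable of (frame_index, track_id) pairs (hypothetical schema).
    """
    frames_by_track = defaultdict(set)
    for frame, track_id in labels:
        frames_by_track[track_id].add(frame)
    gaps = {}
    for track_id, frames in frames_by_track.items():
        # Any frame between first and last appearance without a label.
        missing = [f for f in range(min(frames), max(frames) + 1)
                   if f not in frames]
        if missing:
            gaps[track_id] = missing
    return gaps


labels = [(0, "ped_1"), (1, "ped_1"), (4, "ped_1"),
          (0, "car_7"), (1, "car_7"), (2, "car_7")]
print(find_track_gaps(labels))  # {'ped_1': [2, 3]}
```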

iMerit helps fill dataset gaps and improve model reliability in dense urban environments through its 3D point cloud annotation services. iMerit offers various LiDAR data labeling services, including semantic annotation, 3D cuboid/box annotation, landmark annotation, polygon annotation, and polyline annotation.

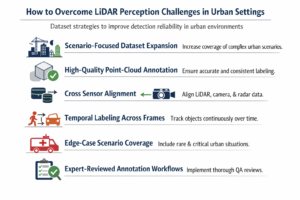

How to Overcome LiDAR Perception Challenges in Urban Settings

Improving LiDAR perception performance in dense urban environments requires changes at the dataset level.

The following dataset strategies help perception teams improve detection reliability in complex urban traffic scenarios.

1. Scenario-Focused Dataset Expansion

Increase dataset representation for environments where LiDAR perception models struggle. Focus especially on dense intersections, construction zones, roadside activity areas, and night driving conditions.

Prioritize sequences where objects appear gradually from behind parked vehicles or move through partially visible areas of the scene. These situations help models learn how objects behave when visibility changes across frames.

Adding traffic situations that involve lane shifts or temporary barriers also helps improve detection stability in environments that differ from structured roadway layouts.
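When the pool of recorded but unlabeled sequences is larger than the annotation budget, scenario priorities can guide which sequences are labeled first. The sketch below is one simple way to do this, assuming each candidate sequence has scene tags and the team assigns a weight to each priority scenario; the schema, tag names, weights, and the `select_for_labeling` helper are all hypothetical.

```python
def select_for_labeling(candidates, priority, budget):
    """Choose up to `budget` candidate sequences that best cover priority tags.

    candidates: {sequence_id: set of scene tags}   (hypothetical schema)
    priority:   {tag: weight}; tags not listed contribute nothing
    """
    def score(seq_id):
        return sum(priority.get(tag, 0.0) for tag in candidates[seq_id])

    # Highest score first; sorted() is stable, so ties keep input order.
    ranked = sorted(candidates, key=score, reverse=True)
    return [seq_id for seq_id in ranked[:budget] if score(seq_id) > 0]


candidates = {
    "seq_001": {"highway"},
    "seq_002": {"intersection", "occlusion"},
    "seq_003": {"construction_zone", "lane_shift"},
    "seq_004": {"night", "intersection"},
}
priority = {"intersection": 1.0, "occlusion": 1.5, "construction_zone": 2.0,
            "lane_shift": 1.5, "night": 1.0}
print(select_for_labeling(candidates, priority, budget=2))  # ['seq_003', 'seq_002']
```

Plain top-k scoring can pick several near-identical sequences. A greedy variant that lowers a tag's weight after each selection containing it would spread the budget more evenly across scenarios.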

2. High-Quality Point-Cloud Annotation

Improve LiDAR annotation consistency across object classes before increasing dataset scale. Detection accuracy depends on how clearly objects are defined inside the point cloud.

Maintain consistent object definitions across vehicle classes and vulnerable road users. Apply semantic segmentation carefully so that background structures do not interfere with how the model learns foreground objects. For more on this, see how semantic segmentation improves object boundary accuracy in autonomous systems.

When 3D cuboids are used for detection training, keep placement stable across sequences rather than adjusting annotation style between batches. These steps help models build stronger spatial relationships between nearby objects in dense traffic scenes.
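One measurable form of cuboid stability: a rigid vehicle does not change size between frames, so the dimensions of its cuboid should stay nearly constant across a sequence. A minimal check, assuming each label records a track ID and the cuboid's length, width, and height in meters, flags tracks whose dimensions drift beyond a tolerance. The record layout, the `unstable_cuboid_tracks` helper, and the 10 cm tolerance are illustrative assumptions; pedestrians and articulated vehicles legitimately change shape and would need a looser rule.

```python
from collections import defaultdict


def unstable_cuboid_tracks(labels, tol_m=0.10):
    """Flag tracks whose cuboid size drifts more than tol_m across frames.

    labels: iterable of (track_id, length_m, width_m, height_m).
    Returns {track_id: (length_spread, width_spread, height_spread)}.
    """
    dims = defaultdict(list)
    for track_id, length, width, height in labels:
        dims[track_id].append((length, width, height))
    flagged = {}
    for track_id, boxes in dims.items():
        # zip(*boxes) regroups values per axis: all lengths, all widths, ...
        spreads = tuple(max(axis) - min(axis) for axis in zip(*boxes))
        if max(spreads) > tol_m:
            flagged[track_id] = spreads
    return flagged


labels = [
    ("car_7", 4.50, 1.85, 1.50), ("car_7", 4.52, 1.84, 1.50),
    ("car_7", 4.49, 1.86, 1.51),
    ("car_9", 4.50, 1.80, 1.45), ("car_9", 4.90, 1.80, 1.45),
]
print(sorted(unstable_cuboid_tracks(labels)))  # ['car_9']
```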

3. Cross-Sensor Alignment

Check spatial alignment between sensors before training multimodal perception models. Small calibration shifts between LiDAR and camera frames can introduce object position errors during fusion.
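Small calibration shifts matter because an angular error turns into a position error that grows with distance. The sketch below assumes a pure yaw error (rotation about the vertical axis) in the LiDAR-to-camera extrinsics and a frame with x pointing forward and z pointing up; the `yaw_error_offset` helper is hypothetical. It rotates a point by the error angle and measures how far it moves.

```python
import math


def yaw_error_offset(point_xyz, yaw_error_deg):
    """Distance (m) a point moves if the LiDAR-to-camera yaw is off by yaw_error_deg."""
    x, y, z = point_xyz
    d = math.radians(yaw_error_deg)
    # Rotate about the vertical (z) axis, as a yaw miscalibration would.
    xr = x * math.cos(d) - y * math.sin(d)
    yr = x * math.sin(d) + y * math.cos(d)
    return math.dist((x, y, z), (xr, yr, z))


for r in (10, 30, 50):
    offset = yaw_error_offset((r, 0.0, 0.0), 0.5)
    print(f"{r} m ahead, 0.5 deg yaw error: {offset:.2f} m")
```

Under these assumptions, a 0.5° yaw error displaces a point 50 m ahead by about 0.44 m, which is comparable to the width of a pedestrian, while the same error at 10 m moves it by under 0.1 m.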

Maintain timestamp consistency across sensors when building training sequences. Also, verify that radar distance estimates align with LiDAR object placements across frames.
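A common way to enforce timestamp consistency is to pair each LiDAR sweep with the nearest camera frame and reject pairs whose time offset exceeds a tolerance. The sketch below assumes timestamps in seconds with the camera list sorted ascending, and uses the standard `bisect` module for the lookup. The `match_nearest` helper and the 20 ms tolerance are hypothetical; a suitable tolerance depends on sensor rates and vehicle speed.

```python
import bisect


def match_nearest(lidar_ts, camera_ts, max_offset_s=0.02):
    """Pair each LiDAR timestamp with the closest camera timestamp.

    camera_ts must be sorted ascending. Returns (lidar_index, camera_index),
    with camera_index None when no frame lies within max_offset_s.
    """
    pairs = []
    for i, t in enumerate(lidar_ts):
        j = bisect.bisect_left(camera_ts, t)
        # The nearest camera frame is either at j or just before it.
        nearby = [k for k in (j - 1, j) if 0 <= k < len(camera_ts)]
        best = min(nearby, key=lambda k: abs(camera_ts[k] - t), default=None)
        if best is not None and abs(camera_ts[best] - t) <= max_offset_s:
            pairs.append((i, best))
        else:
            pairs.append((i, None))
    return pairs


print(match_nearest([0.0, 0.1, 0.2], [0.005, 0.105, 0.25]))
# [(0, 0), (1, 1), (2, None)]
```

Unmatched sweeps are better dropped or flagged than paired with a stale frame, since a 50 ms mismatch at urban speeds can move an object by more than half a meter.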

Improved cross-sensor alignment supports stronger fusion performance in dense traffic environments, where a single sensor may lose visibility.

4. Temporal Labeling Across Frames

Urban perception depends on understanding how objects move through a scene over time. Yet labeling frames independently limits the model’s ability to learn motion continuity.

Temporal labeling addresses this by allowing models to track objects across frames rather than treating each frame in isolation. Pedestrians, cyclists, and vehicles are followed throughout sequences, maintaining identity even during brief occlusions behind larger obstacles. This continuity not only improves motion prediction but also enables models to recover objects that temporarily disappear, which is valuable in busy intersections.
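The idea of keeping an identity through a brief occlusion can be illustrated with a toy tracker. The sketch below associates each detection with the nearest existing track in bird's-eye view and lets an unmatched track coast for up to `max_missed` frames, so a pedestrian who disappears behind a bus gets the same ID back on reappearing. Greedy nearest-neighbor association with a frozen last position is a simplification chosen for brevity; production trackers usually add motion models (for example, Kalman filters) and optimal assignment. The class name and thresholds are hypothetical.

```python
import math
from itertools import count


class ToyTracker:
    """Greedy nearest-neighbor tracker that coasts through short occlusions."""

    def __init__(self, max_dist_m=1.5, max_missed=3):
        self.max_dist_m = max_dist_m    # association gate in bird's-eye view
        self.max_missed = max_missed    # frames a track may go unseen
        self.tracks = {}                # track_id -> {"pos": (x, y), "missed": n}
        self._next_id = count(1)

    def update(self, detections):
        """Assign a track id to each (x, y) detection in the current frame."""
        unmatched = set(self.tracks)
        ids = []
        for det in detections:
            best = min(unmatched, default=None,
                       key=lambda tid: math.dist(self.tracks[tid]["pos"], det))
            if best is not None and math.dist(self.tracks[best]["pos"], det) <= self.max_dist_m:
                unmatched.discard(best)
                tid = best
            else:
                tid = next(self._next_id)
            self.tracks[tid] = {"pos": det, "missed": 0}
            ids.append(tid)
        # Tracks with no detection keep their last position and identity
        # until they have been missing for more than max_missed frames.
        for tid in unmatched:
            self.tracks[tid]["missed"] += 1
            if self.tracks[tid]["missed"] > self.max_missed:
                del self.tracks[tid]
        return ids


tracker = ToyTracker()
print(tracker.update([(10.0, 2.0)]))   # pedestrian visible: [1]
print(tracker.update([]))              # occluded: []
print(tracker.update([]))              # still occluded: []
print(tracker.update([(10.8, 2.3)]))   # reappears with the same id: [1]
```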

5. Edge-Case Scenario Coverage

Add targeted examples of rare but high-impact urban driving situations. These scenes appear less frequently in standard datasets but strongly influence the reliability of perception during deployment.

Examples include emergency vehicle movement through traffic, sudden pedestrian crossings near parked vehicles, and micro-mobility traffic moving between lanes.

6. Expert-Reviewed Annotation Workflows

Maintain annotation consistency as datasets scale by introducing structured review stages during production. Quality drift often appears when multiple teams label complex urban scenes without shared validation checkpoints.

Apply multi-stage QA workflows across annotation batches to verify class boundaries, sensor alignment, and tracking continuity across frame sequences.

Human reviewers are also important for handling edge cases that automated pipelines often miss, especially in partial visibility conditions and dense intersections.
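One common QA checkpoint compares two independent labels of the same object and routes low-agreement cases to an expert reviewer. The sketch below measures agreement with an axis-aligned 3D intersection-over-union (IoU) between cuboids. It assumes boxes are stored as center x, y, z followed by length, width, height (length along x, width along y), and it ignores heading (yaw), which a real check on rotated cuboids would need to handle. The helper names and the 0.7 threshold are hypothetical.

```python
def iou_3d_axis_aligned(a, b):
    """IoU of two cuboids given as (cx, cy, cz, length, width, height). Yaw is ignored."""
    overlap = 1.0
    for axis in range(3):
        # Interval covered by each box along this axis.
        lo = max(a[axis] - a[axis + 3] / 2, b[axis] - b[axis + 3] / 2)
        hi = min(a[axis] + a[axis + 3] / 2, b[axis] + b[axis + 3] / 2)
        if hi <= lo:
            return 0.0
        overlap *= hi - lo
    vol_a = a[3] * a[4] * a[5]
    vol_b = b[3] * b[4] * b[5]
    return overlap / (vol_a + vol_b - overlap)


def needs_review(label_a, label_b, threshold=0.7):
    """Send the object to an expert reviewer when annotators disagree."""
    return iou_3d_axis_aligned(label_a, label_b) < threshold


car_a = (0.0, 0.0, 0.0, 4.0, 2.0, 1.5)
car_b = (1.0, 0.0, 0.0, 4.0, 2.0, 1.5)   # second annotator placed it 1 m further ahead
print(iou_3d_axis_aligned(car_a, car_b), needs_review(car_a, car_b))
```

A 1 m disagreement along a 4 m car already drops the IoU to 0.6 here, which is why shared validation checkpoints tend to catch placement drift that looks minor in a single frame.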

How iMerit Improved Training Data for an Autonomous Vehicle (AV) Perception Pipeline

An autonomous vehicle company partnered with iMerit to strengthen its 3D perception stack with better-annotated LiDAR and camera data. The company needed consistent annotations across its model inputs to support object detection, lane understanding, and scene interpretation.

The project focused on improving annotation coverage across both 2D camera frames and 3D point cloud scenes. This included targets such as traffic signs, lane boundaries, road structure, and dynamic objects in LiDAR frames. Human-in-the-loop workflows were used to maintain consistency across sequences and support reliable labeling in dense traffic conditions.

As part of the dataset preparation process, iMerit’s annotation team worked on:

- Labeling objects across LiDAR scenes and camera frames.

- Annotating lane markings and road boundaries across sensor views.

- Improving dataset coverage across different road types and lighting conditions.

- Maintaining annotation consistency using structured review stages.

iMerit helped the company improve the quality of the training dataset for AV perception and supported stronger depth estimation, object detection, and scene understanding across the perception stack.

Conclusion

Urban environments often reveal LiDAR perception challenges earlier than controlled benchmark settings. The sensor itself remains essential for 3D scene understanding, but continued performance gains now depend more on how urban datasets are selected, labeled, and validated across complex traffic conditions.

Key Takeaways

- Occlusions and dense traffic reduce visibility and make object detection less stable in city scenes.

- Scenario diversity improves model behavior more than simply increasing dataset size.

- Temporal labeling helps models recover objects during short visibility gaps.

- Sensor alignment between LiDAR, camera, and radar supports more reliable scene interpretation.

- Review-driven annotation workflows help maintain consistency as datasets scale.

iMerit helps perception teams improve LiDAR performance in urban environments through high-quality 3D point cloud annotation and multimodal alignment. With expert-guided quality workflows, teams can expand scenario coverage, maintain labeling consistency across sequences, and support more reliable perception in complex city driving.

Talk to an iMerit expert today to learn how you can improve the performance of your LiDAR perception models.