For several years, AI strategy was largely a benchmarking exercise. Organizations compared foundation model providers, invested in fine-tuning pipelines, and tracked incremental improvements in reasoning, perception, and multimodal performance. During that period of rapid capability growth, selecting a stronger model often translated directly into measurable gains, so it was natural and correct for model choice to sit at the center of most AI investment decisions.

That logic is losing its edge. High-performing models are now widely accessible through commercial APIs and open-source ecosystems, and while meaningful performance differences still exist, they are narrower across most enterprise applications than they were even two years ago. Integration tooling, deployment infrastructure, and monitoring systems have similarly matured and standardized. The result is that access to a capable model is no longer a differentiating condition; it’s closer to a baseline. Long-term competitive advantage is shifting toward something less visible and harder to replicate: how these systems are actually operated after they go live.

What Benchmark Performance Can and Cannot Tell You

Controlled evaluation provides genuine value. Validation datasets are curated, criteria are clearly defined, and performance is measured under stable conditions, making benchmark results a reasonable basis for model selection and initial validation.

The limitation is that benchmark performance represents a snapshot taken under circumstances that production environments rarely resemble for long. Once an AI system is deployed into a live setting, it begins interacting with evolving data patterns, expanding operational workflows, and human review processes that are far less static than anything in a validation suite. New user behaviors emerge. Rare or long-tail cases appear more frequently than anticipated. Reviewer teams scale, sometimes rapidly, across multiple regions or vendors; guidelines written during early validation often encounter situations that were never explicitly defined at launch.

These changes don’t typically produce immediate or obvious failure. Instead, they introduce gradual variation, inter-reviewer disagreement increases in specific categories, escalation rates shift, and certain cases get resolved inconsistently across teams. Without structured monitoring, these variations accumulate quietly, influencing retraining datasets, distorting evaluation metrics, and eroding overall system reliability. The instability originates in the operational layer surrounding the model, not in the model itself.

How Operational Gaps Develop in Practice

Operational gaps rarely announce themselves. They tend to emerge slowly through processes that look functional on the surface, which is precisely what makes them difficult to detect without deliberate measurement.

1. Healthcare Imaging

A model may achieve strong validation performance within a centralized testing process. After deployment across multiple hospital systems, subtle differences in labeling practices begin to develop, as one institution classifies borderline findings more conservatively than another, based on local clinical norms or reviewer tendencies that were never explicitly documented. If calibration sessions are infrequent and inter-annotator agreement isn’t tracked systematically across sites, these differences persist and compound. As updated datasets incorporate regionally varied labels, training signals begin to diverge from what the original validation process established, in ways that are difficult to trace back to their source.

2. Autonomous Mobility

Perception and mapping systems depend heavily on consistent annotation standards across large, distributed teams. When those teams operate across different geographies, minor differences in how rare or ambiguous road scenarios are labeled can accumulate into meaningful inconsistency. Without periodic cross-team recalibration and structured quality audits, these variations propagate into simulation testing environments and influence downstream performance, often only becoming apparent well after the decisions that caused them were made.

3. Generative AI Evaluation

Guideline documents written during early development cannot anticipate every ambiguous or novel prompt that emerges in production at scale. Reviewers must interpret unfamiliar scenarios and make judgment calls, and if those decisions aren’t captured, reviewed, and standardized, disagreement increases over time. When disagreement is averaged rather than analyzed, the underlying ambiguity goes unresolved and becomes embedded in evaluation data, quietly shaping what the system learns about quality.

None of these are model failures, and none of them show up clearly in benchmark results. All of them are operational failures that develop gradually in the space between validation and sustained production use.

What Operational Maturity Actually Requires

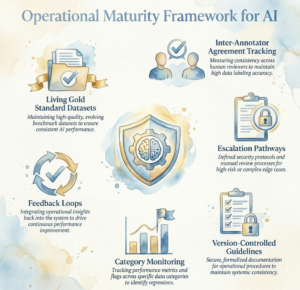

Operational maturity is the set of systems and practices that prevent these gradual shifts from undermining reliability at scale. It is built from specific, measurable components rather than from a broad organizational commitment to quality.

- Living gold standard datasets that regularly test reviewer alignment against clearly defined criteria, rather than treating initial validation benchmarks as permanent references.

- Inter-annotator agreement is tracked by category and over time so that emerging divergence is visible and actionable before it becomes systemic.

- Clearly defined escalation pathways so that ambiguous or high-risk cases are reviewed consistently and documented appropriately across teams, rather than resolved through informal judgment that varies by reviewer or region.

- Version-controlled guideline updates with clear visibility into when changes were introduced and how they affected evaluation outcomes, both for internal quality control and to meet audit requirements in regulated domains.

- Category-level monitoring to detect clusters of disagreement or shifts in escalation frequency before they become embedded in downstream data.

- Feedback loops that connect evaluation outcomes directly to retraining priorities, so that evaluation functions as part of a continuous improvement cycle rather than a one-time validation step.

This is what separates organizations that maintain performance stability over time from those that find themselves debugging drift they can’t easily explain.

Why This Shift Is Accelerating

Several converging trends are making operational maturity more central to AI strategy. As AI differentiation increasingly depends on sustained operations rather than model selection alone, the relative impact of marginal benchmark improvements continues to shrink. Model performance across major providers has become sufficiently strong for a wide range of enterprise use cases, which shifts attention toward sustained reliability. At the same time, AI systems are increasingly embedded in workflows that carry real consequences for clinical decisions, vehicle behavior, financial risk assessments, and customer-facing interactions, where consistency over time matters more than peak performance observed in controlled testing.

Regulatory expectations are expanding in parallel. Organizations operating in healthcare, financial services, autonomous systems, and other regulated domains must now demonstrate traceability, audit readiness, and defensible review processes as a condition of continued operation. The ability to explain how outputs were evaluated, how disagreements were resolved, and how guidelines evolved has become part of what makes an AI system credible and defensible to external scrutiny.

Under these conditions, two organizations can deploy comparable models and begin with nearly identical validation metrics, yet find themselves in very different positions six to twelve months later. One maintains consistent reviewer alignment, structured escalation, and measurable quality monitoring. The other relies on guidelines that haven’t been updated since launch and review processes that have never been formally audited. The divergence in long-term stability reflects operational design, and it compounds over time in ways that become increasingly difficult and expensive to reverse.

What This Means for Enterprise AI Teams

For organizations building or scaling AI programs, AI deployment should be understood as the beginning of a continuous operational lifecycle rather than the conclusion of a development process. Sustained performance requires structured calibration routines, measurable disagreement tracking, clear adjudication processes, documented guideline evolution, and feedback mechanisms that connect evaluation outcomes to retraining priorities. These aren’t add-ons to a mature AI program; they are what a mature AI program is built from.

As model access becomes more standardized across the industry, the teams that invest in disciplined human-in-the-loop workflows, structured quality controls, and governance-ready oversight systems will hold an advantage that compounds over time, because operational maturity is built through practice, documentation, and institutional knowledge that can’t simply be acquired through a better API call. The broader shift from model-centric AI differentiation toward operational discipline reflects AI’s evolution from experimental capability into embedded infrastructure, and as that transition continues, how systems are operated will matter as much as how they were built.

Conclusion: Operational Excellence Is the New Competitive Moat

The organizations that will lead in AI over the next several years are not necessarily those that access the best models first; they are the ones that have built the operational discipline to keep those models performing reliably at scale. Benchmark scores get you to AI deployment. What happens after is determined by the quality of your data pipelines, the consistency of your human-in-the-loop review processes, the rigor of your escalation and calibration workflows, and the feedback loops that connect evaluation back to improvement. That is where durable advantage is built, and where most organizations still have significant ground to cover.

This is precisely the space iMerit works in. Across healthcare AI, autonomous mobility, and generative AI evaluation, iMerit combines expert-led data annotation, structured quality operations, and purpose-built tooling through Ango Hub to help organizations maintain the consistency and traceability that production AI systems demand. Whether the challenge is inter-annotator alignment across distributed teams, RLHF pipelines that need to scale without drifting, or domain-specific annotation that requires genuine subject matter expertise, iMerit is built to operationalize exactly what this article describes, not as a one-time engagement, but as a sustained operational capability.

This operational strength is further reinforced by the iMerit Scholars program, which develops specialized talent from underserved communities into highly trained AI data experts, ensuring that consistency, domain expertise, and quality are built directly into the human layer of AI systems. Learn more.

If your AI program is at the stage where model selection is largely settled, and operational reliability is the next frontier, that’s a conversation worth having.