AI systems do not build themselves; they require human judgment to work well. Behind every successful chatbot or medical tool is a team of professionals, including teachers, doctors, lawyers, and linguists, who review outputs and correct mistakes. This work, known as AI training or human-in-the-loop work, is a rapidly growing field where domain expertise matters more than coding skills. Whether you are labeling data, evaluating prompts, or applying specialized knowledge to complex tasks, the skills you already have are more relevant than you think. With focus and the right entry point, you can move from annotation work to high-impact roles like those in the iMerit Scholars Program.

The demand for AI training professionals is growing faster than the supply, and the majority of roles do not require a technical background. An AI training career is rapidly becoming one of the most expansive professional paths in the global technology economy.

This blog breaks down what AI training work actually looks like, what skills matter, how careers grow over time, and how you can take your first real step. Whether you are exploring a career change, looking to apply your professional expertise in a new way, or simply curious about what working in AI actually involves, this is your starting point.

What Is AI Training and Why Does It Need Humans?

When a company builds an AI model, the model does not automatically know what good looks like. It needs to be shown. It needs humans to review its responses and say: This answer is helpful, this one is not. This image is labeled correctly, that one is not. This translation sounds natural, that one is awkward.

That process of teaching, correcting, and evaluating is what an AI training career means in practice. It is not about coding or building the AI itself. It is about providing the human judgment that the AI cannot generate on its own.

This is sometimes called human-in-the-loop work, meaning humans are involved at key stages of the AI development process, not just at the beginning or the end, but continuously, as the model learns and improves.

The result is that AI companies need large numbers of thoughtful, reliable people who can do these AI evaluation tasks well. And the more specialized the AI, the more specialized the humans need to be.

What Does the Work Actually Look Like?

An AI training career is not one type of job. It covers a wide range of tasks depending on what kind of AI is being built and how far along it is. Here are the most common types of work, and what skills they draw on most.

Reviewing and Rating AI Responses

You read what the AI wrote and score it based on criteria like accuracy, helpfulness, tone, and safety. You might compare two responses and decide which is better, or explain in writing why one needs improvement. This draws heavily on analytical thinking, attention to detail, and clear written communication.

Data Labeling

You perform data labeling by looking at images, audio clips, videos, or pieces of text and tagging them with information the AI needs to learn from. This might mean identifying objects in a photo, transcribing speech, or categorizing written content. Consistency and the ability to apply guidelines accurately at volume are what matter most here.

Writing and Evaluating Prompts

You work with prompt and response pairs, crafting prompts for the AI and evaluating the responses against defined standards. This includes checking whether each response is accurate, relevant, well-structured, and aligned with user intent. Good prompt writing requires strong language skills and a clear sense of how real people ask questions, not how developers phrase them.

Chain of Thought Reasoning

Some tasks require you to work through a problem step by step alongside the model, correcting its reasoning at each stage rather than just rating the final answer. This is common in mathematics, logic, and complex decision-making tasks. It requires structured thinking and often subject matter knowledge or domain expertise.

Red Teaming

You deliberately try to break the model. You probe it with adversarial prompts, edge cases, and scenarios designed to expose where it fails, produces biased outputs, hallucinates facts, or generates harmful content. Finding these vulnerabilities before deployment is the entire point. Adversarial thinking and creativity are the core skills here.

Hallucination and Bias Detection

You review model outputs specifically to identify where the AI is generating confident but incorrect information, or where its responses reflect bias. This requires careful reading and often domain expertise to catch errors that sound plausible on the surface.

Image and Video Generation Evaluation

You assess AI-generated images and videos for quality, accuracy, safety, and alignment with the prompt. This might mean checking whether a generated image reflects what was asked, whether it contains harmful content, or whether a video generation model is producing coherent results. Visual judgment and safety awareness are central to these AI evaluation tasks.

Agent Evaluation

AI agents take autonomous actions, whether booking travel, managing files, executing code, or interacting with other systems. Evaluating them means assessing whether the agent behaves as intended, handles unexpected situations safely, and does not take actions it should not. This requires systems thinking and the ability to reason about sequences of decisions, not just individual outputs.

Domain-Specific Evaluation

At higher levels of an AI training career, you work as a domain expert, where your professional background becomes the primary qualification. A doctor reviewing medical AI outputs. A lawyer evaluating a legal reasoning model. A linguist assessing translations in a language that very few people speak fluently. Professional credentials and subject matter depth are what make this work possible.

What Skills Do You Actually Need?

The skills that matter most in AI training are not technical. They are the kinds of skills that transfer from almost any professional or academic background.

Attention to detail

AI training work requires consistency. A small error repeated across thousands of examples teaches the model the wrong thing. In practice, this means noticing when an AI response is almost right but subtly wrong: a fact that sounds plausible but is slightly off, or a tone that is technically polite but would feel condescending to a real user. The model learns from every example you approve. If you let the almost-right ones through, the model learns to be almost right.

Clear written communication

Much of the feedback you give is written. Saying “this response is bad” is not useful feedback. Saying “this response answers the wrong question and assumes context the user never provided” is.

Analytical thinking

You are often working in gray areas where the right answer is not obvious. Two AI responses might both be factually correct, but one buries the most important information at the end while the other leads with it. Recognizing that difference, and being able to explain why it matters, is analytical thinking applied to AI training.

Domain knowledge

This is what opens the door to higher-level, higher-paying work. A general reviewer might be able to tell that a medical response sounds wrong. A doctor can tell you exactly why it is wrong, which part of the reasoning failed, and what the correct answer should be.

Comfort with structured guidelines

Guidelines need to be understood deeply enough to apply correctly even when the situation is not covered. A guideline might say responses should be concise. But what if the user asked a genuinely complex question? Knowing that concise means free of unnecessary words, not short at the expense of usefulness, is the kind of judgment that makes the difference.

Process orientation

AI training work is structured and sequential. Tasks follow defined workflows, evaluation criteria are applied in a specific order, and quality depends on following that process consistently. People who naturally think in terms of process and procedure do well here.

Sustained focus

Much of this work involves extended periods of careful, attentive evaluation. The ability to maintain concentration and quality over continuous hours of data labeling work, without rushing or cutting corners as the volume increases, is a real and valued skill in this field.

How AI Training Careers Grow Over Time

An AI training career is not a dead end. It is an entry point into one of the fastest-growing areas of the technology industry, with a clear career path for people who develop their skills and build a track record.

Starting out: AI Trainer or Annotator

Most people begin by completing data labeling or evaluation tasks according to established guidelines. The focus at this stage is on building speed, accuracy, and consistency. You learn how evaluation frameworks work, how to use annotation tools, and how to produce work that meets professional quality standards.

Moving up: Reviewer or Quality Analyst

With experience, many practitioners move into quality roles, reviewing other people’s work, flagging inconsistencies, and helping maintain the standards that model development depends on. This stage builds a much deeper understanding of what makes training data high quality and why it matters.

Going deeper: Domain Expert

At this stage, your professional background becomes the primary qualification. Practitioners with credentials in fields such as medicine, law, or linguistics evaluate outputs that a general reviewer cannot fully judge, explaining which part of the reasoning failed and what the correct answer should be.

Leading: Project Lead or Data Operations Specialist

The most experienced practitioners move into roles that combine technical understanding with leadership, managing annotation teams, designing evaluation rubrics, working directly with AI development clients, and ensuring that entire data pipelines meet the quality standards required for production AI.

Beyond these stages, the skills built in AI training transfer well into adjacent careers in AI product management, data operations, AI ethics, and model development itself.

What Skills Each Role Actually Uses

| Task Type | Core Skills Needed |
| --- | --- |
| Reviewing and rating responses | Analytical thinking, written communication, and attention to tone and accuracy |
| Data labeling | Consistency, guideline adherence, and sustained focus at volume |
| Writing and evaluating prompts | Language skills, understanding of user intent, and process orientation |
| Chain of thought reasoning | Logical thinking, subject knowledge, and the ability to identify flawed reasoning steps |
| Red teaming | Adversarial thinking, creativity, and understanding of model failure modes |
| Hallucination and bias detection | Critical reading, domain knowledge, and pattern recognition |
| Image and video generation evaluation | Visual judgment, understanding of prompt alignment, and safety awareness |
| Agent evaluation | Systems thinking, the ability to assess sequential decisions, and edge case identification |
| Domain-specific evaluation | Professional credentials, domain expertise, and high-stakes judgment |

Industries Hiring AI Training Professionals

AI training roles exist across a wide range of industries. These are the sectors creating the most significant and sustained demand right now.

Language and communication: Every AI that processes or generates language needs humans to evaluate it. Multilingual professionals are especially valuable, since assessing a model’s outputs in a language requires genuine fluency. If you speak more than one language, particularly one that is underrepresented in AI development, that is a real competitive advantage.

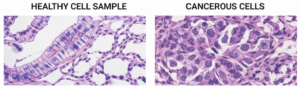

Healthcare: Medical AI is one of the most active and consequential areas of AI development. The stakes of incorrect outputs are high, which means genuine clinical knowledge is not optional. Organizations like iMerit work with credentialed medical professionals through the iMerit Scholars Program to ensure the human expertise shaping these systems is real and accountable.

Autonomous Vehicles and Robotics: The reliability demands of self-driving systems and industrial robots make this one of the most active hiring areas for AI training professionals. Companies building perception AI need specialists who understand real-world conditions and the kind of edge cases that standard datasets do not capture.

Frontier Model Development: The most advanced AI systems, the large foundation models that power products used by millions, require continuous evaluation across every modality and language. This is some of the highest-impact AI training work available, and demand from leading AI labs is ongoing.

Image and Video Generation: As generative AI systems become more capable and more widely deployed, the need for skilled human evaluators in this space is growing rapidly. Companies building these systems need people who can assess quality, safety, and alignment at scale.

Agent Training: AI agents that take autonomous actions in the real world represent one of the fastest-growing areas of AI development. Evaluating agent behavior requires a different kind of thinking than evaluating text outputs, and demand for qualified evaluators in this space is still at an early stage but accelerating quickly.

Geospatial, mapping, and agriculture: AI systems that analyze satellite imagery, support precision agriculture, and power mapping applications require annotators who can work with spatial data, land use classification, and crop detection. iMerit has dedicated work in this area, and it represents a growing source of AI training roles for professionals with relevant domain knowledge.

Legal, financial, and professional services: AI is being built to assist with legal research, compliance, document review, and financial analysis. These systems require practitioners who understand the professional standards and nuances of those fields.

E-commerce and retail: Search relevance, product data enrichment, and content moderation for shopping platforms all require human evaluation at scale. This is one of the more accessible entry points into AI training work, with a large and ongoing volume of tasks.

Education and science: AI tutoring systems, research tools, and scientific models all require human experts to evaluate whether outputs are accurate, appropriate, and genuinely useful in their domain.

Spotlight: The iMerit Scholars Program

If you have advanced expertise in medicine, law, STEM, linguistics, or the humanities and you are looking for a serious, structured way to apply that expertise to AI development, the iMerit Scholars Program is one of the most rigorous paths available.

iMerit is one of the world’s leading AI data companies, working with major AI developers across healthcare, generative AI, and computer vision. The Scholars Program brings credentialed domain experts, including healthcare scholars and professionals from other disciplines, directly into the AI development process, not as isolated task workers, but as collaborators who reason through problems, provide structured feedback, and work iteratively with AI development teams.

What makes it different:

- Scholars hold graduate-level or higher qualifications, and the work is designed to match that depth.

- Scholars reason through problems, explain their thinking, and work through iterative feedback cycles that directly shape how a model behaves.

- Work is conducted through the Ango Deep Reasoning Lab, a platform built specifically for expert-level AI collaboration, not general crowd annotation.

- In a single engagement with a global consumer technology company, iMerit deployed more than 80 Scholars across mathematics, law, biology, linguistics, and economics, completing over 5,000 tasks across 30 types of reasoning.

The program offers flexible work, competitive compensation, and the opportunity to contribute to AI systems that affect millions of people.

How to Get Started

Start with what you already know. Your professional background, language skills, and domain expertise are all potentially relevant. Think about the field you work or study in and ask whether AI is actively being built there. It almost certainly is.

Get familiar with how evaluation tasks are structured. Many AI companies publish sample guidelines and evaluation rubrics publicly. Reading through a few gives you a realistic picture of the work and helps you develop the judgment that hiring teams look for.

Build familiarity with annotation tools. Platforms like Ango Hub and Labelbox are widely used for data labeling.

Apply directly. The most direct path to an AI training career is through companies like iMerit that connect domain experts with AI development projects. The iMerit Scholars Program is specifically designed for this.

Conclusion

An AI training career is one of the most accessible and genuinely impactful ways to work at the center of how modern AI actually gets built. It requires you to bring your judgment, your domain expertise, and your ability to perform complex AI evaluation tasks carefully and communicate clearly.

The professionals who will shape the next generation of AI systems are not all sitting in research labs. Many of them are doctors, teachers, lawyers, linguists, and graduates who realized that the knowledge they spent years building is exactly what AI development needs most.

If that sounds like you, the door is open. The iMerit Scholars Program is a strong place to start, and the work you do there will directly influence AI systems used by millions of people around the world.

Ready to apply your expertise to the world’s most advanced AI systems? Join us now!