A Tesla Optimus humanoid robot walks through a factory with people. Predictable robot behavior requires priority-based control and a legal framework. Credit: Tesla

Robots are becoming smarter and more predictable. Tesla Optimus lifts boxes in a factory, Figure 01 pours coffee, and Waymo carries passengers without a driver. These technologies are no longer demonstrations; they are increasingly entering the real world.

But with this comes the central question: How can we ensure that a robot will make the right decision in a complex situation? What happens if it receives two conflicting commands from different people at the same time? And how can we be confident that it will not violate basic safety rules—even at the request of its owner?

Why do conventional systems fail?

Most modern robots operate on predefined scripts: a set of commands and a set of reactions. In engineering terms, these are behavior trees, finite-state machines, or sometimes machine learning. These approaches work well in controlled conditions, but commands in the real world may contradict one another.

In addition, environments may change faster than the robot can adapt, and there is no clear “priority map” of what matters here and now. As a result, the system may hesitate or choose the wrong scenario. In the case of an autonomous car or a humanoid robot, such hesitation is no longer just an error; it is a safety risk.

From reactivity to priority-based control

Today, most autonomous systems are reactive—they respond to external events and commands as if they were equally important. The robot receives a signal, retrieves a matching scenario from memory, and executes it, without considering how it fits into a larger goal.

As a result, commands and events compete at the same level of priority. Long-term tasks are easily interrupted by immediate stimuli, and in a complex environment, the robot may flail, trying to satisfy every input signal.

Beyond such problems in routine operation, there is always the risk of technical failures. For example, during the first World Humanoid Robot Games in Beijing this month, the H1 robot from Unitree deviated from its optimal path and knocked a human participant to the ground.

A similar case had occurred earlier in China: During maintenance work, a robot suddenly began flailing its arms chaotically, striking engineers until it was disconnected from power.

Both incidents clearly demonstrate that modern autonomous systems often react without analyzing consequences. In the absence of contextual prioritization, even a trivial technical fault can escalate into a dangerous situation.

Architectures without built-in logic for safety priorities and for managing interactions with subjects (such as humans, robots, and objects) offer no protection against such scenarios.

My team designed an architecture to transform behavior from a “stimulus-response” mode into deliberate choice. Every event first passes through mission and subject filters, is evaluated in the context of environment and consequences, and only then proceeds to execution. This enables robots to act predictably, consistently, and safely—even in dynamic and unpredictable conditions.

Two hierarchies: Priorities in action

We designed a control architecture that directly addresses the unpredictability of purely reactive robots. At its core are two interlinked hierarchies.

1. Mission hierarchy — A structured system of goal priorities:

- Strategic missions — fundamental and unchangeable: “Do not harm a human,” “Assist humans,” “Obey the rules.”

- User missions — tasks set by the owner or operator

- Current missions — secondary tasks that can be interrupted for more important ones

2. Hierarchy of interaction subjects — The prioritization of commands and interactions depending on source:

- Highest priority — owner, administrator, operator

- Secondary — authorized users, such as family members, employees, or assigned robots

- External parties — other people, animals, or robots who are considered in situational analysis but cannot control the system
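As a rough illustration only (this is not the patented implementation), the two hierarchies above could be represented as ordered enumerations, with a small arbitration function that picks one command when several arrive at once. All names here (`MissionLevel`, `SubjectLevel`, `Command`, `arbitrate`) are hypothetical, and ranking by mission level first and subject level second is an assumption made for the sketch.

```python
from dataclasses import dataclass
from enum import IntEnum


class MissionLevel(IntEnum):
    """Mission hierarchy; a lower value means a higher priority."""
    STRATEGIC = 0  # fundamental and unchangeable, e.g. "Do not harm a human"
    USER = 1       # tasks set by the owner or operator
    CURRENT = 2    # secondary tasks that can be interrupted


class SubjectLevel(IntEnum):
    """Hierarchy of interaction subjects; a lower value means a higher priority."""
    OWNER = 0       # owner, administrator, operator
    AUTHORIZED = 1  # family members, employees, assigned robots
    EXTERNAL = 2    # considered in situational analysis, cannot control


@dataclass(frozen=True)
class Command:
    text: str
    mission: MissionLevel
    source: SubjectLevel


def may_issue_commands(source: SubjectLevel) -> bool:
    """External parties are observed but have no control over the system."""
    return source is not SubjectLevel.EXTERNAL


def arbitrate(commands):
    """Return the command to act on, or None if no sender has control.

    Assumption: mission level is compared first, subject level breaks ties.
    """
    eligible = [c for c in commands if may_issue_commands(c.source)]
    if not eligible:
        return None
    return min(eligible, key=lambda c: (c.mission, c.source))
```

With this ordering, a user-level task from an authorized employee would win over an interruptible current task from the owner, and a request from a bystander would never be selected.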

How predictable control works in practice

Case 1. Humanoid robot — A robot is carrying parts on an assembly line. A child from a visiting tour group asks it to hand over a heavy tool. The request comes from an external party. The mission is potentially unsafe and not part of current tasks.

- Decision: Ignore the command and continue work.

- Outcome: Both the child and the production process remain safe.

Case 2. Autonomous car — A passenger asks to speed up to avoid being late. Sensors detect ice on the road. The request comes from a high-priority subject. But the strategic mission “ensure safety” outweighs convenience.

- Decision: The car does not increase speed and recalculates the route.

- Outcome: Safety has absolute priority, even if inconvenient to the user.
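The two cases can be reduced to a small decision rule. The sketch below is a simplification under stated assumptions: the `Request` fields and the `decide` function are hypothetical names, and a real system would derive both flags from perception and identity checks rather than receive them ready-made.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    action: str
    source_can_command: bool  # False for external parties (Case 1)
    unsafe_now: bool          # True if acting would breach a strategic mission


def decide(request: Request) -> str:
    """Return "ignore", "refuse", or "execute" for a single request."""
    # External parties cannot control the system, whatever they ask for.
    if not request.source_can_command:
        return "ignore"
    # Strategic missions outrank even the highest-priority subject.
    if request.unsafe_now:
        return "refuse"
    return "execute"


# Case 1: a visiting child asks the robot for a heavy tool.
child_request = Request("hand over heavy tool", source_can_command=False, unsafe_now=True)

# Case 2: a passenger asks the car to speed up on an icy road.
passenger_request = Request("speed up", source_can_command=True, unsafe_now=True)

print(decide(child_request))      # ignore
print(decide(passenger_request))  # refuse
```

The same passenger request on a dry road, with `unsafe_now=False`, would be executed.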

Three filters of predictable decision-making

Every command passes through three levels of verification:

- Context — environment, robot state, event history

- Criticality — how dangerous the action would be

- Consequences — what will change if the command is executed or refused

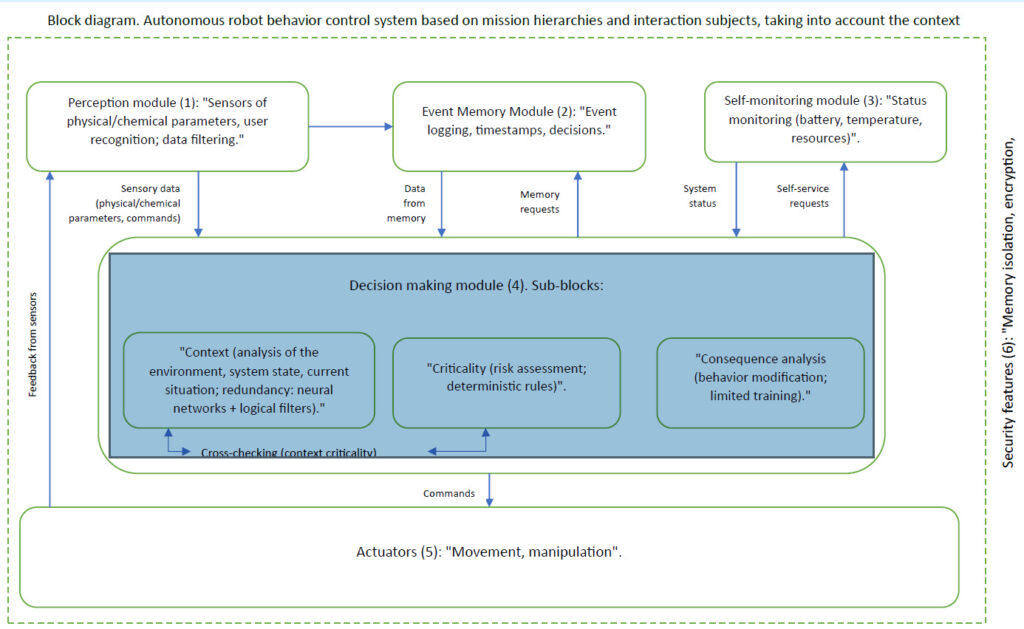

If any filter raises an alarm, the decision is reconsidered. Technically, the architecture is implemented according to the block diagram below:

A control architecture to address robot reactivity. (Click here to enlarge.) Source: Zhengis Tileubay
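One way to picture the three verification levels in code is as a chain of independent checks, each of which may raise an alarm. This is a minimal sketch, not the architecture in the diagram: the filter functions, the dictionary keys (`road_icy`, `risk`, `harm_if_executed`), and the 0.5 risk threshold are all invented for illustration.

```python
from typing import Callable, Optional

Situation = dict  # hypothetical: a flat dict of sensor and state readings
Filter = Callable[[str, Situation], Optional[str]]


def context_filter(command: str, situation: Situation) -> Optional[str]:
    """Context: environment, robot state, event history."""
    if command == "speed_up" and situation.get("road_icy", False):
        return "context: ice detected on the road"
    return None


def criticality_filter(command: str, situation: Situation) -> Optional[str]:
    """Criticality: how dangerous the action would be (threshold is arbitrary)."""
    if situation.get("risk", 0.0) > 0.5:
        return "criticality: estimated risk too high"
    return None


def consequences_filter(command: str, situation: Situation) -> Optional[str]:
    """Consequences: what changes if the command is executed."""
    if situation.get("harm_if_executed", False):
        return "consequences: execution is predicted to cause harm"
    return None


FILTERS = [context_filter, criticality_filter, consequences_filter]


def review(command: str, situation: Situation) -> list:
    """Run every filter and collect alarms; an empty list means proceed."""
    alarms = []
    for check in FILTERS:
        alarm = check(command, situation)
        if alarm is not None:
            alarms.append(alarm)
    return alarms
```

A caller would execute the command only when `review` returns an empty list, and otherwise reconsider the decision using the collected alarms.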

Legal aspect: Neutral-autonomous status

Beyond the technical architecture, we propose a new legal model. For precise understanding, it must be described in formal legal language. The “neutral-autonomous status” of AI and AI-powered autonomous systems is a legally recognized category in which such systems are regarded neither as objects of traditional legal responsibility, like tools, nor as subjects of law, like natural or legal persons.

This status introduces a new legal category that eliminates uncertainty in AI regulation and avoids extreme approaches to defining its legal nature. Modern legal systems operate with two main categories:

- Subjects of law — natural and legal persons with rights and obligations

- Objects of law — things, tools, property, and intangible assets controlled by subjects

AI and autonomous systems do not fit either category. If considered objects, all responsibility falls entirely on developers and owners, exposing them to excessive legal risks. If considered subjects, they face a fundamental problem: lack of legal capacity, intent, and the ability to assume obligations.

Thus, a third category is necessary to establish a balanced framework for responsibility and liability—neutral-autonomous status.

Legal mechanisms of neutral-autonomous status

The core principle is that each AI or autonomous system must be assigned clearly defined missions that set its purpose, scope of autonomy, and legal framework of responsibility. Missions serve as a legal boundary that limits the actions of AI and determines responsibility distribution.

Courts and regulators should evaluate the behavior of autonomous systems based on their assigned missions, ensuring structured accountability. Developers and owners are responsible only within the missions assigned. If the system acts outside them, liability is determined by the specific circumstances of deviation.

Users who intentionally exploit systems beyond their designated tasks may face increased liability.

In cases of unforeseen behavior, when actions remain within assigned missions, a mechanism of mitigated responsibility applies. Developers and owners are shielded from full liability if the system operates within its defined parameters and missions. Users benefit from mitigated responsibility if they used the system in good faith and did not contribute to the anomaly.

Hypothetical example

An autonomous vehicle hits a pedestrian who suddenly runs onto the highway outside a crosswalk. The system’s missions: “ensure safe delivery of passengers under traffic laws” and “avoid collisions within the system’s technical capabilities” by detecting the distance sufficient for safe braking.

An injured party demands $10 million from the self-driving car manufacturer.

Scenario 1: Compliance with missions. The pedestrian appeared 11 m ahead, a gap the car covers in 0.5 seconds at 80 km/h (50 mph) and far less than the roughly 40 m (131.2 ft.) it needs to brake safely. The car began braking but could not stop in time. The court rules that the automaker complied with its missions and reduces liability to $500,000, with partial fault assigned to the pedestrian. Savings: $9.5 million.

Scenario 2: Mission calibration error. At night, due to a camera calibration error, the car misclassified the pedestrian as a static object, delaying braking by 0.3 seconds. This time, the carmaker is liable for the misconfiguration, but under the status definition the award is $5 million rather than the full $10 million.

Scenario 3: Mission violation by user. The owner directed the car into a prohibited construction zone, ignoring warnings. Full liability of $10 million falls on the owner. The autonomous vehicle company is shielded since missions were violated.

This example shows how neutral-autonomous status structures liability, protecting developers and users depending on circumstances.
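The distances in the scenarios can be checked with basic kinematics. The sketch below assumes constant deceleration and treats the 40 m figure as pure braking distance with no reaction delay; the function names are illustrative, and both simplifications are assumptions, not part of the legal model.

```python
KMH_TO_MS = 1000 / 3600  # km/h to m/s


def distance_travelled(speed_kmh: float, seconds: float) -> float:
    """Distance in metres covered at constant speed."""
    return speed_kmh * KMH_TO_MS * seconds


def implied_deceleration(speed_kmh: float, braking_distance_m: float) -> float:
    """Constant deceleration (m/s^2) that stops the car in the given distance."""
    v = speed_kmh * KMH_TO_MS
    return v ** 2 / (2 * braking_distance_m)


def impact_speed_kmh(speed_kmh: float, decel: float, gap_m: float) -> float:
    """Speed left after braking over gap_m, from v^2 = v0^2 - 2*a*d."""
    v = speed_kmh * KMH_TO_MS
    v_squared = max(v ** 2 - 2 * decel * gap_m, 0.0)
    return v_squared ** 0.5 / KMH_TO_MS


decel = implied_deceleration(80, 40)
print(round(distance_travelled(80, 0.5), 1))       # 11.1 (m, the Scenario 1 gap)
print(round(decel, 2))                             # 6.17 (m/s^2)
print(round(impact_speed_kmh(80, decel, 11)))      # 68 (km/h)
print(round(distance_travelled(80, 0.3), 1))       # 6.7 (m lost in Scenario 2)
```

Under these assumptions, a car that brakes the instant the pedestrian appears 11 m ahead would still be travelling at roughly 68 km/h at the point of contact, and the 0.3-second delay in Scenario 2 corresponds to about 6.7 m travelled before braking starts.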

Neutral-autonomous status offers business, regulatory benefits

With the implementation of neutral-autonomous status, legal risks are reduced. Developers are protected from unjustified lawsuits tied to system behavior, and users can rely on predictable responsibility frameworks.

Regulators would gain a structured legal foundation, reducing inconsistency in rulings. Legal disputes involving AI would shift from arbitrary precedent to a unified framework. A new classification system for AI autonomy levels and mission complexity could emerge.

Companies adopting neutral status early can lower legal risks and manage AI systems more effectively. Developers would gain greater freedom to test and deploy systems within legally recognized parameters. Businesses could position themselves as ethical leaders, enhancing reputation and competitiveness.

In addition, governments would obtain a balanced regulatory tool, maintaining innovation while protecting society.

Why predictable robot behavior matters

We are on the threshold of mass deployment of humanoid robots and autonomous vehicles. If we fail to establish robust technical and legal foundations today, the risks may outweigh the benefits tomorrow, and public trust in robotics could be undermined.

An architecture built on mission and subject hierarchies, combined with neutral-autonomous status, is the foundation upon which the next stage of predictable robotics can safely be developed.

This architecture has already been described in a patent application. We are ready for pilot collaborations with manufacturers of humanoid robots, autonomous vehicles, and other autonomous systems.


About the author

Zhengis Tileubay is an independent researcher from the Republic of Kazakhstan working on issues related to the interaction between humans, autonomous systems, and artificial intelligence. His work is focused on developing safe architectures for robot behavior control and proposing new legal approaches to the status of autonomous technologies.

In the course of his research, Tileubay developed a behavior control architecture based on a hierarchy of missions and interacting subjects. He has also proposed the concept of the “neutral-autonomous status.”

Tileubay has filed a patent application for this architecture entitled “Autonomous Robot Behavior Control System Based on Hierarchies of Missions and Interaction Subjects, with Context Awareness” with the Patent Office of the Republic of Kazakhstan.