Agriculture is undergoing a fundamental transformation. For decades, farmers have relied on chemical herbicides to manage weeds, but rising resistance, tightening regulations, and growing environmental pressure are forcing the industry to rethink its approach. Enter laser-based weeding, a technology that replaces chemistry with physics, using precisely directed beams of light to destroy unwanted plants at their source.

The appeal is obvious. No residue, no soil contamination, no herbicide-resistant superweeds. But the promise of laser weed control is only as good as the intelligence guiding each shot. These systems must make decisions in milliseconds, distinguishing a wheat seedling from a grass weed with enough certainty to fire a high-powered laser within 0.5 cm of a crop. That level of precision demands more than rough detection. It demands pixel-perfect semantic segmentation, the ability to classify every individual pixel in a field image and know, with surgical accuracy, exactly where each plant begins and ends.

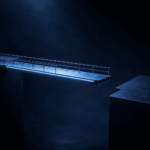

Bounding boxes, the workhorse of general object detection, simply cannot meet this bar. Understanding why requires a closer look at what happens when lasers operate in the field.

Why Bounding Boxes Fall Short in the Field

A bounding box is a rectangle drawn around an object of interest. For many computer vision tasks, that is enough. Identifying a car in traffic, flagging a face in a photo, or counting pallets in a warehouse all work well with rectangular annotations.

Fields do not cooperate with rectangles. Crops and weeds grow in irregular, overlapping patterns. Their leaves twist, curl, and interlock. In early growth stages, weed seedlings frequently emerge within crop rows, pressing against or sliding under the leaves of the very plants they threaten. A bounding box drawn around a weed in this scenario inevitably captures portions of the surrounding crop. If a laser relies on box coordinates to determine where to fire, the margin for collateral damage is unacceptably wide.
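
To make the contamination concrete, the following is a minimal sketch on a toy label grid. The label ids (`SOIL`, `CROP`, `WEED`), the helper names, and the scene itself are illustrative assumptions, not taken from any real dataset or system. It draws the tightest possible box around a diagonal weed leaf and counts what else falls inside it.

```python
# Label ids are assumptions made for this sketch only.
SOIL, CROP, WEED = 0, 1, 2


def bounding_box(mask, label):
    """Return (top, left, bottom, right), inclusive, around all pixels of `label`."""
    rows = [r for r, row in enumerate(mask) if label in row]
    cols = [c for row in mask for c, value in enumerate(row) if value == label]
    if not rows:
        raise ValueError("label not present in mask")
    return min(rows), min(cols), max(rows), max(cols)


def box_composition(mask, box):
    """Count how many pixels of each label fall inside the box."""
    top, left, bottom, right = box
    counts = {}
    for r in range(top, bottom + 1):
        for c in range(left, right + 1):
            counts[mask[r][c]] = counts.get(mask[r][c], 0) + 1
    return counts


# Toy 5x6 scene: a diagonal weed leaf growing across a crop leaf.
scene = [
    [0, 0, 0, 0, 2, 0],
    [0, 1, 1, 2, 0, 0],
    [0, 1, 2, 1, 0, 0],
    [0, 2, 1, 1, 0, 0],
    [2, 0, 0, 0, 0, 0],
]

box = bounding_box(scene, WEED)
counts = box_composition(scene, box)
print(box, counts)
```

In this toy scene the tightest weed box covers 25 pixels, of which only 5 are weed and 6 are crop. Box coordinates alone give no way to tell those apart.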

There is also a deeper limitation. Bounding boxes answer the question of where something roughly exists. They say nothing about a plant’s structure. Two overlapping leaves from different species can share the same bounding box, leaving the system with no reliable way to decide which pixels belong to the weed and which belong to the crop it must protect. For a laser targeting the meristem, the growth point located at the base of the stem or the crown of the root, this ambiguity is not a software inconvenience. It is a failure condition.

The Strategic Advantage of Pixel-Perfect Semantic Segmentation for Robotics

Pixel-perfect semantic segmentation resolves this ambiguity at the most fundamental level. Rather than drawing a box, segmentation assigns every individual pixel in an image a specific label: crop, weed, soil, or another class. The result is a dense, boundary-accurate map of the scene that reflects the true geometry of each plant, including where it overlaps with its neighbors.

For autonomous robotic systems, this granularity is what separates a prototype from a production-ready tool. When every individual pixel carries a confirmed classification, the laser guidance system has the structural information it needs to locate the meristem with confidence, even when leaves from adjacent plants are touching. The model does not estimate. It knows, at the pixel level, precisely where the weed ends and the crop begins.
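
As a rough illustration of how a per-pixel map can gate a shot, the sketch below picks a target inside the weed mask and checks its distance to the nearest crop pixel. Several things here are assumptions made for illustration: the label ids, the use of the weed pixel nearest the mask centroid as a stand-in for the meristem (a real system would locate the growth point with a dedicated model), the ground resolution `cm_per_px`, and the reading of 0.5 cm as a minimum standoff distance.

```python
import math

SOIL, CROP, WEED = 0, 1, 2  # assumed label ids


def pixels_of(mask, label):
    """List (row, col) coordinates of every pixel carrying `label`."""
    return [(r, c) for r, row in enumerate(mask)
            for c, value in enumerate(row) if value == label]


def pick_target(mask):
    """Weed pixel closest to the weed centroid: a crude meristem stand-in."""
    weed = pixels_of(mask, WEED)
    if not weed:
        return None
    centre_r = sum(r for r, _ in weed) / len(weed)
    centre_c = sum(c for _, c in weed) / len(weed)
    return min(weed, key=lambda p: (p[0] - centre_r) ** 2 + (p[1] - centre_c) ** 2)


def clearance_cm(mask, target, cm_per_px):
    """Distance from the target to the nearest crop pixel, in centimetres."""
    crop = pixels_of(mask, CROP)
    if not crop:
        return math.inf
    return min(math.dist(target, p) for p in crop) * cm_per_px


def safe_to_fire(mask, cm_per_px, min_clearance_cm=0.5):
    """Return (target, ok); ok is False when no weed exists or crop is too close."""
    target = pick_target(mask)
    if target is None:
        return None, False
    return target, clearance_cm(mask, target, cm_per_px) >= min_clearance_cm


scene = [
    [1, 1, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 2, 2, 2],
    [0, 0, 0, 0, 0, 2, 0],
]

print(safe_to_fire(scene, cm_per_px=0.2))  # target 4 px from crop: 0.8 cm
print(safe_to_fire(scene, cm_per_px=0.1))  # same pixels, finer scale: 0.4 cm
```

The same mask passes or fails depending on the ground resolution, which is why the check has to run on pixel labels plus a calibrated scale rather than on box corners.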

This precision is measured using Mean Intersection over Union, or Mean IoU, the standard benchmark for evaluating segmentation models. For each class, IoU is the number of pixels where the predicted and ground-truth regions agree, divided by the number of pixels covered by either; Mean IoU averages that ratio across classes. A high Mean IoU score means the predicted weed region closely matches the real contour of the weed, which is precisely the guarantee that laser-based weeding systems need before they can operate within 0.5 cm of a living crop. iMerit’s annotation pipelines are designed to produce training data that supports high Mean IoU across diverse field conditions, helping model performance on benchmark datasets translate to reliable targeting in the field.
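
For readers who want to see the metric spelled out, here is a minimal pure-Python sketch of Mean IoU on small label grids. The function name, the label ids, and the choice to skip classes absent from both prediction and ground truth are assumptions of this sketch; evaluation toolkits differ on that last point.

```python
def mean_iou(pred, truth, labels):
    """Average per-class IoU, skipping classes absent from both grids."""
    ious = []
    for label in labels:
        intersection = union = 0
        for pred_row, truth_row in zip(pred, truth):
            for p, t in zip(pred_row, truth_row):
                in_pred, in_truth = p == label, t == label
                intersection += in_pred and in_truth
                union += in_pred or in_truth
        if union:
            ious.append(intersection / union)
    if not ious:
        raise ValueError("none of the labels appear in pred or truth")
    return sum(ious) / len(ious)


# 0 = soil, 1 = crop, 2 = weed (assumed ids). One weed pixel is mislabeled as crop.
truth = [
    [0, 0, 1, 1],
    [0, 2, 2, 1],
    [0, 2, 2, 0],
]
pred = [
    [0, 0, 1, 1],
    [0, 2, 1, 1],
    [0, 2, 2, 0],
]

score = mean_iou(pred, truth, labels=(0, 1, 2))
print(round(score, 3))  # soil 1.0, crop 0.75, weed 0.75 -> 0.833
```

A single mislabeled pixel lowers the IoU of two classes at once, because it is a false positive for crop and a false negative for weed.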

High-resolution RGB imagery, augmented by vegetation indices used as additional engineered input channels, strengthens this further. Excess Green, or ExG, amplifies the green reflectance that distinguishes living vegetation from bare soil, while the Color Index of Vegetation Extraction, known as CIVE, provides a secondary signal calibrated specifically for separating plant material under variable lighting conditions. Together, these engineered channels give segmentation models richer spectral information than RGB alone can supply, improving the model’s ability to separate vegetation from soil in low-contrast scenes and to distinguish weed leaves from crop leaves even when the two share similar blade shapes or growth postures.
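
The two indices can be sketched as follows, using the forms commonly given in the literature: ExG as 2g − r − b on chromatic coordinates (each channel divided by R + G + B), and CIVE as 0.441R − 0.811G + 0.385B + 18.78745 on raw 8-bit values. Whether a particular pipeline normalizes the channels the same way is an assumption here, and the helper names and sample pixel values are illustrative.

```python
def excess_green(r, g, b):
    """ExG = 2g - r - b on chromatic coordinates; higher means greener."""
    total = r + g + b
    if total == 0:
        return 0.0  # pure black pixel: no chromatic information
    return (2 * g - r - b) / total


def cive(r, g, b):
    """CIVE on raw 8-bit RGB; lower values indicate vegetation."""
    return 0.441 * r - 0.811 * g + 0.385 * b + 18.78745


def add_index_channels(image):
    """Turn rows of (R, G, B) pixels into rows of (R, G, B, ExG, CIVE)."""
    return [
        [(r, g, b, excess_green(r, g, b), cive(r, g, b)) for r, g, b in row]
        for row in image
    ]


leaf = (60, 140, 50)    # illustrative green leaf pixel
soil = (120, 100, 80)   # illustrative brown soil pixel

for name, pixel in (("leaf", leaf), ("soil", soil)):
    print(name, round(excess_green(*pixel), 3), round(cive(*pixel), 3))

five_channel = add_index_channels([[leaf, soil]])
```

For these sample pixels, ExG is clearly positive for the leaf and zero for the soil, while CIVE is negative for the leaf and positive for the soil. The two signals point the same way with opposite sign conventions.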

The result is the gold standard of targeting data, a per-pixel blueprint that gives laser weed control the reliability it needs to operate across the full diversity of real growing conditions.

The Data Bottleneck: Why Quality Labels Are Hard to Scale

The challenge is that producing this level of annotation is genuinely difficult. Pixel-level labeling takes roughly ten times longer than drawing bounding boxes. Annotators must trace the precise outline of each leaf, account for occlusion, and make species-level decisions about plants that, in early growth stages, look nearly identical. A grass weed growing among wheat seedlings may share the same narrow blade shape, the same pale green color, and the same growth posture. Misclassifying it costs nothing at the annotation stage but cascades into model errors that a laser acts on at full power.

This is where the concept of high-quality ground truth becomes decisive. A segmentation model is only as reliable as the data it was trained on. Even slight imprecision compounds during training: outlines that drift by a few pixels, labels that guess on ambiguous cases, species identifications made without domain knowledge. The model learns the approximation, not the reality. The consequence shows up directly in Mean IoU. A model trained on imprecise labels will produce boundary predictions that diverge from the plant’s true edge, and in precision agriculture, that divergence is the difference between a dead weed and a scorched crop.
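
A small numerical sketch shows how quickly a few pixels of drift erode overlap on a small object. The 10x10 square standing in for a seedling leaf and the helper names are assumptions chosen for illustration; real leaves are irregular and the exact numbers will differ.

```python
def iou(a, b):
    """IoU between two sets of (row, col) pixels."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def square(top, left, size):
    """Set of pixels covered by a size x size square."""
    return {(r, c) for r in range(top, top + size)
            for c in range(left, left + size)}


truth = square(0, 0, 10)  # 10x10 stand-in for a seedling leaf

for shift in (0, 1, 2, 3):
    drifted = square(0, shift, 10)  # same outline, shifted sideways
    print(shift, round(iou(truth, drifted), 3))
# 0 1.0
# 1 0.818
# 2 0.667
# 3 0.538
```

On an object this size, an outline that is off by three pixels already overlaps the true region by barely more than half.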

Scaling annotation while maintaining accuracy is not a problem that speed alone can solve. Volume without quality yields ground truth that is high-quality in name only. What the industry needs is a pipeline that combines automated efficiency with genuine human expertise and applies that expertise precisely where the data is hardest.

How iMerit Solves the Precision Gap

iMerit has built its annotation capabilities around this exact requirement. The company fields a workforce of more than 500 trained labelers and quality assurance specialists, many with domain-specific backgrounds relevant to agricultural and life sciences applications. This team does not simply draw outlines. It brings botanical knowledge to species disambiguation, understands the structural cues that separate a weed’s crown from a crop’s cotyledon, and applies consistent judgment to the edge cases that automated systems consistently get wrong.

Efficiency is built into the process from the start. iMerit’s purpose-built tooling, including the Ango Hub platform, uses integrated machine learning models to generate pre-labels automatically, giving annotators a starting point rather than a blank canvas. The human-in-the-loop workflow then takes over, with expert reviewers refining, correcting, and validating each annotation before it enters the training pipeline. This human-in-the-loop approach is particularly valuable for the cases that matter most in the field, overlapping leaves, dense canopy sections, and species pairs that look almost identical at the seedling stage.
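
One way to picture a pre-label-then-review workflow is as a prioritized queue. The sketch below is purely hypothetical: the `PreLabel` record, its fields, and the ordering rule are assumptions for illustration and do not describe Ango Hub's actual API or iMerit's internal process. Every pre-label still goes to a reviewer; the ordering only decides which ones are seen first.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    """Hypothetical record for one machine-generated plant outline."""
    instance_id: str
    label: str
    confidence: float          # model confidence in [0, 1]
    touches_other_plant: bool  # outline overlaps or abuts another plant


def review_order(prelabels):
    """Put overlapping plants first, then sort by ascending confidence."""
    return sorted(
        prelabels,
        key=lambda p: (not p.touches_other_plant, p.confidence),
    )


queue = review_order([
    PreLabel("a", "weed", 0.97, False),
    PreLabel("b", "crop", 0.62, False),
    PreLabel("c", "weed", 0.88, True),
])

print([p.instance_id for p in queue])  # ['c', 'b', 'a']
```

Ordering the queue this way sends reviewer attention first to overlapping leaves and uncertain species calls, the cases the text above identifies as hardest.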

This human-in-the-loop refinement ensures pixel-level accuracy in complex field conditions.

Weeding robots collect field data through multiple sensors, and every data stream requires annotated training data before the robot can act on it. iMerit supports this full robotics data pipeline, from pixel-level 2D crop and weed segmentation to 3D Point Cloud annotation that gives robots spatial awareness of plant structure, crop row geometry, terrain depth, and fallow ground.

Using iMerit’s 3D PCT workflows, robotic vision systems learn to interpret field scenes not just as flat images but as three-dimensional environments, enabling more precise weed localization, safer navigation between crop rows, and reliable targeting even in dense or overlapping vegetation.

Real-time performance adds another layer of urgency to this quality requirement. Laser weed control systems deployed on autonomous field robots must run inference at 20 or more frames per second, completing a full segmentation pass in approximately 12 milliseconds on edge hardware such as the NVIDIA Jetson Xavier. At that speed, there is no opportunity for a second pass or a correction cycle. The model either has the boundary right or it does not. Training on high-quality ground truth from iMerit’s annotation pipeline is what gives these models the accuracy needed to sustain reliable targeting at the inference speeds that field operations demand.
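
The timing figures above imply a simple per-frame budget, sketched below. The frame rate and inference time come from the text; the robot ground speed of 0.5 m/s is an assumption added only to show how far the platform moves between frames, and the function names are illustrative.

```python
def frame_budget_ms(fps):
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def headroom_ms(fps, inference_ms):
    """Time left per frame for capture, targeting, and firing."""
    return frame_budget_ms(fps) - inference_ms


def travel_cm_per_frame(speed_m_per_s, fps):
    """Ground distance covered between consecutive frames, in centimetres."""
    return speed_m_per_s * 100.0 / fps


print(frame_budget_ms(20))           # 50.0 ms per frame at 20 fps
print(headroom_ms(20, 12))           # 38.0 ms left after a 12 ms pass
print(travel_cm_per_frame(0.5, 20))  # 2.5 cm per frame at an assumed 0.5 m/s
```

Under that assumed speed the robot covers several times the 0.5 cm tolerance between frames, which helps explain why a stale or corrected-later mask is of little use.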

The combination delivers measurable results. By pairing automated pre-labeling with human-in-the-loop quality control, iMerit produces high-precision segmentation data at scale. Customizable data pipelines support synthetic data augmentation, multi-class labeling for diverse weed species, and iterative model feedback loops, all structured to meet the demands of laser-based weeding and precision agriculture systems.

For companies building the next generation of autonomous weeding robots, iMerit functions as a primary data annotation partner capable of scaling labeled data production without compromising the boundary accuracy that Mean IoU demands.

Quick Reference: iMerit Agricultural AI Capabilities

| Feature | Metric/Capability |
| --- | --- |
| Annotation Accuracy | 95% accuracy in plant semantic segmentation |
| Data Volume | 10 million+ agricultural images labeled |
| Workforce Expertise | 500+ trained data labelers and QA experts |
| Annotation Speed | Pixel-level labeling takes 10x longer than bounding boxes, requiring professional scaling |
| Targeting Precision | Lasers fire within 0.5 cm of crop seedlings without damage |

Conclusion: Precise Data, Sustainable Yields

Laser-based weeding is ready to replace herbicides on a commercial scale, but its reliability in the field depends entirely on the quality of the data used to train its perception models. Bounding boxes describe proximity. Pixel-perfect semantic segmentation describes reality: every leaf boundary, every overlap, every meristem location mapped with the accuracy that a laser demands. Vegetation indices like ExG and CIVE extend that accuracy into challenging lighting conditions, while Mean IoU provides the objective benchmark that confirms model predictions match the physical world.

iMerit bridges the distance between raw field imagery and field-ready autonomous systems. By combining expert annotation, intelligent pre-labeling, human-in-the-loop quality assurance, and deep experience across more than 10 million agricultural images, iMerit produces the pixel-precise training data that transforms laser hardware from an impressive demonstration into a dependable tool for sustainable farming. When precision agriculture companies need data that performs at 20 frames per second in conditions as messy and complex as a real growing field, that is the standard iMerit delivers.