3D spatial data is most commonly represented in one of two ways: point clouds and meshes. Both let machines interpret three-dimensional environments, but they differ significantly in structure, computational requirements, and application suitability. For AI model developers working on computer vision applications, the choice between them can substantially affect model performance, training efficiency, and deployment success.

What Is a 3D Point Cloud?

A 3D point cloud represents spatial data as a collection of discrete points positioned in three-dimensional space, each defined by X, Y, and Z coordinates. These points can also carry additional attributes such as color, intensity measurements, or timestamp information, depending on the acquisition method. Point clouds capture the raw geometric essence of objects and environments without making assumptions about connectivity or surface continuity.
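As a minimal sketch of this definition, a point cloud can be modeled as an unordered list of points, each with required X, Y, Z coordinates and optional attributes. The `Point` class, its field names, and the `bounding_box` helper are illustrative assumptions, not part of any particular library. Plain Python is used to keep the example self-contained.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Point:
    """One sample in a point cloud: a position plus optional attributes."""
    x: float
    y: float
    z: float
    intensity: Optional[float] = None             # e.g. LiDAR return strength
    color: Optional[Tuple[int, int, int]] = None  # RGB, 0-255 per channel
    timestamp: Optional[float] = None             # acquisition time in seconds


def bounding_box(cloud: List[Point]):
    """Return (min_corner, max_corner) of the axis-aligned box around the cloud."""
    xs = [p.x for p in cloud]
    ys = [p.y for p in cloud]
    zs = [p.z for p in cloud]
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


# Points are stored in no particular order and carry no connectivity.
cloud = [
    Point(0.0, 0.0, 0.0, intensity=0.8),
    Point(1.0, 0.5, 0.2, color=(255, 0, 0)),
    Point(0.3, 2.0, 1.5, timestamp=12.5),
]

min_corner, max_corner = bounding_box(cloud)
print(min_corner, max_corner)
```

Nothing in the structure says which points are neighbors or which lie on the same surface. Any such relationship has to be computed from the coordinates.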

Each point exists independently, requiring no knowledge of neighboring points for basic operations. This independence makes point clouds particularly suitable for representing complex geometries, irregular surfaces, and environments with varying densities of spatial information. LiDAR sensors, depth cameras, and photogrammetry systems naturally produce point cloud data, making this format the direct output of many 3D sensing technologies.

Point clouds excel at preserving fine-grained spatial detail and the measurement accuracy of the source sensor. Because the format places no constraint on point density, the same representation handles both dense urban scans and sparse outdoor scenes, adapting to whatever information density each sensing modality captures.

What Is a 3D Mesh?

A 3D mesh constructs surfaces by connecting points through geometric primitives, typically triangles or other polygons, creating a continuous representation of object boundaries. Unlike point clouds, meshes explicitly define the relationships between vertices through edges and faces, establishing a topology that describes how surfaces connect and, when the mesh is closed (watertight), enclose a volume.

The mesh structure consists of vertices (points in 3D space), edges (connections between vertices), and faces (polygonal surfaces bounded by edges). This organization allows meshes to efficiently represent smooth surfaces using fewer data points than equivalent point cloud representations. The polygonal faces interpolate between vertices, creating continuous surfaces that can be rendered, analyzed, and manipulated using established computer graphics algorithms.
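The vertex, edge, and face structure can be sketched with an indexed triangle mesh, a common layout in which faces store indices into a shared vertex list. The tetrahedron data and the `unique_edges` helper below are illustrative assumptions chosen to keep the example small.

```python
from typing import List, Set, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # three indices into the vertex list

# A tetrahedron: the smallest closed triangle mesh.
vertices: List[Vertex] = [
    (0.0, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (0.0, 1.0, 0.0),
    (0.0, 0.0, 1.0),
]
faces: List[Face] = [
    (0, 2, 1),
    (0, 1, 3),
    (0, 3, 2),
    (1, 2, 3),
]


def unique_edges(faces: List[Face]) -> List[Tuple[int, int]]:
    """Derive the undirected edges implied by the triangle faces."""
    edges: Set[Tuple[int, int]] = set()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            edges.add((min(u, v), max(u, v)))  # store each edge once
    return sorted(edges)


edges = unique_edges(faces)
# Euler characteristic V - E + F; it equals 2 for a closed, sphere-like mesh.
euler = len(vertices) - len(edges) + len(faces)
print(len(vertices), len(edges), len(faces), euler)
```

Edges are derived rather than stored here because the faces already imply them. Formats that store edges explicitly trade extra memory for faster traversal.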

Meshes naturally support operations like surface normal computation, collision detection, and, for closed meshes, volume calculation. They can represent both simple geometric shapes and complex organic forms with high visual fidelity, making them the preferred format for computer graphics, 3D printing, and applications requiring surface-based analysis.
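Two of these operations can be sketched directly from the face list. The example assumes a closed triangle mesh whose faces are wound counter-clockwise when viewed from outside. The helper names are hypothetical, and the volume uses the standard signed-tetrahedron sum rather than any specific library's method.

```python
import math


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def face_normal(vertices, face):
    """Unit normal of a triangle, following its winding order."""
    a, b, c = (vertices[i] for i in face)
    n = cross(sub(b, a), sub(c, a))
    length = math.sqrt(dot(n, n))
    return tuple(x / length for x in n)


def signed_volume(vertices, faces):
    """Volume of a closed mesh: sum of signed tetrahedra formed with the origin."""
    total = 0.0
    for face in faces:
        a, b, c = (vertices[i] for i in face)
        total += dot(a, cross(b, c))
    return total / 6.0


# Tetrahedron with outward-facing (counter-clockwise) triangles.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
faces = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]

bottom_normal = face_normal(vertices, faces[0])
volume = signed_volume(vertices, faces)
print(bottom_normal, volume)
```

If the mesh has holes or inconsistent winding, the signed sum no longer corresponds to an enclosed volume, so the result should only be trusted for watertight input.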

Key Differences Between 3D Meshes and Point Clouds

Data Structure and Representation

Point clouds resemble scattered dots in space, with each dot representing a precise location but having no connection to its neighbors. Meshes function like connect-the-dots puzzles, where lines and triangular faces link individual points together to form complete surfaces. This fundamental difference means point clouds preserve raw measurements exactly as sensors captured them, while meshes create continuous surfaces by filling in the gaps between points.

Computational Complexity

Point cloud processing resembles analyzing a pile of loose puzzle pieces: algorithms must first work out which points are near each other, typically with a nearest-neighbor search, before they can reason about local shape. Mesh processing works more like an assembled puzzle, where those relationships are already stored. Mesh algorithms can follow existing edges and faces directly, which makes neighborhood-based operations cheaper.
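The difference in neighbor lookup can be illustrated with a small sketch. For the point cloud, the example uses a brute-force nearest-neighbor search, chosen for brevity; production systems usually rely on spatial indexes such as k-d trees. For the mesh, it builds an adjacency table once from the faces. Function names and sample data are assumptions made for illustration.

```python
import math


def k_nearest(points, query_index, k):
    """Point cloud: find neighbors by measuring distance to every other point."""
    q = points[query_index]
    dists = [
        (math.dist(q, p), i)
        for i, p in enumerate(points)
        if i != query_index
    ]
    dists.sort()  # ties are broken by the lower point index
    return [i for _, i in dists[:k]]


def vertex_adjacency(num_vertices, faces):
    """Mesh: neighbors are read straight from the stored faces."""
    adjacency = {i: set() for i in range(num_vertices)}
    for a, b, c in faces:
        adjacency[a].update((b, c))
        adjacency[b].update((a, c))
        adjacency[c].update((a, b))
    return adjacency


# The same four coordinates, once as a bare cloud and once as a two-triangle mesh.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
faces = [(0, 1, 2), (1, 3, 2)]

cloud_neighbors = k_nearest(points, 0, k=2)         # scans every point per query
mesh_neighbors = vertex_adjacency(len(points), faces)[0]  # lookup after one pass
print(cloud_neighbors, sorted(mesh_neighbors))
```

Both approaches agree on this tiny example, but the point cloud query has to examine the whole cloud (or a spatial index built over it), while the mesh answer comes from connectivity that is already part of the data.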

Storage and Memory Requirements

Point clouds have the simpler storage layout: just the coordinates and attributes of each point. Meshes also store connectivity (which vertices make up each face), so they need more storage per vertex. However, meshes can often represent smooth surfaces with far fewer vertices than a point cloud needs points, so for objects with simple shapes a mesh may use less memory overall.
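A back-of-the-envelope calculation makes this trade-off concrete. The sketch assumes 32-bit floats for coordinates and 32-bit integers for face indices, with no compression or file-format overhead. The counts in the usage lines are hypothetical, picked only to show both directions of the comparison.

```python
BYTES_PER_FLOAT32 = 4
BYTES_PER_INT32 = 4


def point_cloud_bytes(num_points, extra_floats_per_point=0):
    """XYZ per point, plus any extra per-point attributes stored as floats."""
    return num_points * (3 + extra_floats_per_point) * BYTES_PER_FLOAT32


def mesh_bytes(num_vertices, num_triangles):
    """XYZ per vertex, plus three vertex indices per triangle."""
    vertex_bytes = num_vertices * 3 * BYTES_PER_FLOAT32
    index_bytes = num_triangles * 3 * BYTES_PER_INT32
    return vertex_bytes + index_bytes


# Same vertex count: connectivity makes the mesh larger.
# A closed triangle mesh has roughly twice as many triangles as vertices.
same_count_cloud = point_cloud_bytes(1_000)
same_count_mesh = mesh_bytes(1_000, 2_000)

# A flat wall: a dense scan needs many points, a mesh needs two triangles.
wall_cloud = point_cloud_bytes(1_000_000)
wall_mesh = mesh_bytes(4, 2)

print(same_count_cloud, same_count_mesh, wall_cloud, wall_mesh)
```

Under these assumptions the mesh is about three times larger at equal vertex count, yet several orders of magnitude smaller for the flat wall, which is the pattern the paragraph above describes.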

Visualization and Rendering

Point clouds appear as clouds of individual dots on screen, requiring special techniques to help viewers understand the underlying surface. Meshes display solid, continuous surfaces that immediately show object shape and can be rendered with realistic lighting, shadows, and textures using standard graphics software.

Geometric Operations

Point clouds excel at maintaining exact measurements and handling irregular shapes, but need specialized tools for tasks like calculating surface area or volume. Meshes make these calculations straightforward since they already define complete surfaces. The choice depends on whether applications need measurement precision or efficient geometric calculations.
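To illustrate why surface area is straightforward on a mesh, the sketch below sums triangle areas over a unit cube split into 12 triangles. The cube data and helper names are assumptions for illustration. An equivalent calculation on a raw point cloud would first require estimating a surface from the points.

```python
import math


def triangle_area(a, b, c):
    """Half the length of the cross product of two triangle edges."""
    ab = (b[0] - a[0], b[1] - a[1], b[2] - a[2])
    ac = (c[0] - a[0], c[1] - a[1], c[2] - a[2])
    cx = ab[1] * ac[2] - ab[2] * ac[1]
    cy = ab[2] * ac[0] - ab[0] * ac[2]
    cz = ab[0] * ac[1] - ab[1] * ac[0]
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)


def surface_area(vertices, faces):
    """Total area of a triangle mesh: the sum over its faces."""
    return sum(triangle_area(*(vertices[i] for i in face)) for face in faces)


# Unit cube: 8 vertices, 6 square sides listed as quads in cyclic order.
vertices = [
    (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0),
    (0.0, 0.0, 1.0), (1.0, 0.0, 1.0), (1.0, 1.0, 1.0), (0.0, 1.0, 1.0),
]
quads = [
    (0, 1, 2, 3), (4, 5, 6, 7),  # bottom, top
    (0, 1, 5, 4), (3, 2, 6, 7),  # front, back
    (0, 3, 7, 4), (1, 2, 6, 5),  # left, right
]
# Split each quad (a, b, c, d) into triangles (a, b, c) and (a, c, d).
faces = [tri for a, b, c, d in quads for tri in ((a, b, c), (a, c, d))]

area = surface_area(vertices, faces)
print(len(faces), area)
```

Winding order does not matter for area, so this calculation works even on meshes that are not consistently oriented.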

When to Use Mesh vs Point Cloud

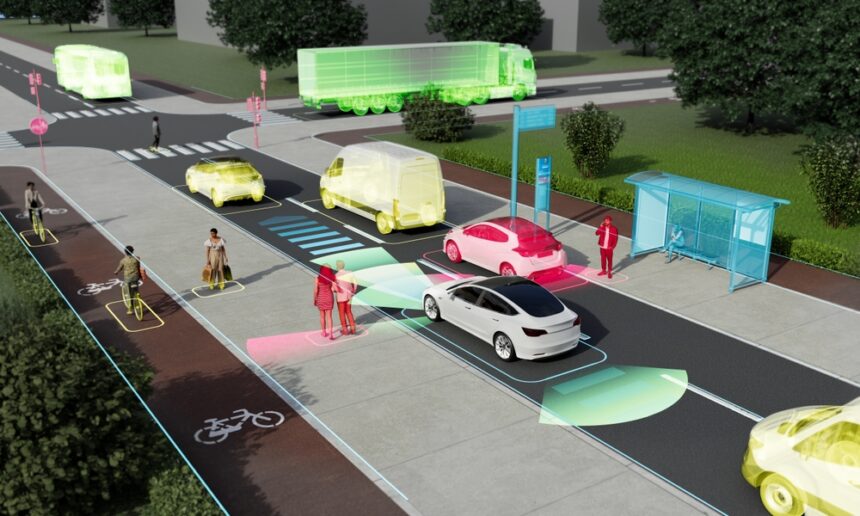

Point clouds excel in data precision and spatial accuracy, making them ideal for fine-tuning models that need high-resolution surface details. Industries like autonomous vehicles rely on point cloud data for precise object detection and spatial mapping, while agriculture applications use point clouds for crop monitoring and yield estimation. Machine learning models trained on point cloud data can leverage the direct spatial relationships captured by sensing systems without losing measurement fidelity.

Meshes work better for applications requiring continuous surface representation and real-time rendering. Point clouds, however, are often the preferred choice for AI model training when spatial precision and measurement accuracy drive performance improvements.

Navigating 3D Point Cloud Annotation with iMerit

Transforming raw point cloud data into training-ready datasets takes specialized expertise and scalable annotation workflows. iMerit’s comprehensive 3D point cloud annotation services bridge the gap between raw sensor data and production-ready AI models. From semantic annotation to 3D cuboid/box annotation, landmark annotation, polygon annotation, and polyline annotation, our solution unifies automation, human domain experts, and analytics to deliver high-quality annotated datasets that accelerate model development across computer vision applications.

Ready to accelerate your 3D AI development? Contact our experts today to discuss how our 3D point cloud annotation services can support your organization’s computer vision goals!
